Serverless architecture is reshaping the way companies deploy and manage applications. This cloud computing model removes the need to manage servers, allowing teams to focus on building and delivering product features instead of handling infrastructure.

Understanding the benefits of serverless architecture and its trade-offs is essential for teams choosing the right cloud approach.

In practice, serverless architecture is most often chosen in scenarios where speed, flexibility, and cost control are critical. It is commonly used for MVP development, event-driven systems, and applications with unpredictable or burst traffic. In these cases, teams can launch faster, scale automatically, and avoid upfront infrastructure investments.

However, serverless is not a universal solution. Its effectiveness depends on workload type, system architecture, and long-term scaling strategy. In some cases, it can introduce performance limitations, higher costs at scale, or reduced control over the environment.

This article is for CTOs, founders, and product teams who are evaluating serverless architecture and need a clear, practical understanding of when it makes sense to use it.

What is Serverless Architecture?

Serverless architecture is a cloud computing model where the cloud provider manages the underlying infrastructure, allowing development teams to focus entirely on application logic instead of server management.

Despite its name, serverless does not mean that servers do not exist. It means that infrastructure provisioning, scaling, and maintenance are handled by providers such as AWS, Google Cloud, or Microsoft Azure. Developers deploy code in the form of functions, which are executed only when needed.

This model is often referred to as Function as a Service (FaaS) and is commonly used in modern, cloud-native applications that require flexibility, scalability, and faster delivery cycles.

These characteristics explain why serverless is widely adopted in modern product development, especially for teams that prioritize speed, scalability, and reduced operational overhead.

How Serverless Architecture Works

Serverless applications are built around events and short-lived function executions. In real-world projects, serverless functions are typically triggered by:

- API requests (HTTP endpoints)

- database changes (e.g., new record, update)

- file uploads (e.g., images, documents)

- scheduled jobs (cron tasks)

- message queues and streaming systems

Once a trigger occurs, the flow looks like this:

- The event is received by the cloud provider

- A function is invoked in response to that event

- The function runs in an isolated environment

- It processes the request and returns a result

- The execution environment is released after completion, although the platform may keep it warm for a while to serve later invocations

This is known as an ephemeral runtime: functions do not run continuously but are started on demand, and no execution environment is guaranteed to survive between invocations. From a business perspective, this directly impacts cost and scalability. Teams are billed only for actual execution time, typically measured in milliseconds. However, billing can become less predictable if:

- functions are triggered too frequently

- execution time is not optimized

- background processes are poorly designed
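The effect of execution time on the bill can be made concrete with a rough estimate. The sketch below assumes a pricing scheme with a per-request fee and a per-GB-second compute fee. The function name is hypothetical and the default rates are illustrative placeholders, not any provider's current price list. Free tiers are ignored.

```python
def estimate_monthly_cost(
    invocations: int,
    avg_duration_ms: float,
    memory_mb: int,
    price_per_million_requests: float = 0.20,  # assumed rate, check your provider
    price_per_gb_second: float = 0.0000167,    # assumed rate, check your provider
) -> float:
    """Rough monthly cost: request fee plus compute fee (GB-seconds)."""
    request_cost = invocations / 1_000_000 * price_per_million_requests
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * price_per_gb_second
    return request_cost + compute_cost


# Same traffic, but one version of the function runs four times longer.
optimized = estimate_monthly_cost(10_000_000, avg_duration_ms=100, memory_mb=512)
unoptimized = estimate_monthly_cost(10_000_000, avg_duration_ms=400, memory_mb=512)
print(f"optimized:   ${optimized:.2f}")
print(f"unoptimized: ${unoptimized:.2f}")
```

Under these assumptions the compute fee dominates the request fee, so execution time is usually the first thing to optimize.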

Core Characteristics of Serverless Architecture

Serverless architecture is defined by several core principles that shape how systems are designed and operated.

Event-driven architecture

Applications react to events instead of running continuously. In practice, this means systems are composed of small, independent functions triggered by user actions, system changes, or external integrations.
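As a minimal sketch of this idea, the example below routes incoming events to small, independent functions. The event shape (`type` and `payload` keys), the event names, and the handler names are all assumptions made for illustration. On a real platform each function is usually deployed separately and the provider does the routing.

```python
import json
from typing import Any, Callable

# Registry mapping an event type to the function that handles it.
HANDLERS: dict[str, Callable[[dict[str, Any]], dict[str, Any]]] = {}


def on(event_type: str):
    """Decorator that registers a handler for one event type."""
    def register(func):
        HANDLERS[event_type] = func
        return func
    return register


@on("file.uploaded")
def handle_upload(payload):
    return {"action": "create_thumbnail", "key": payload["key"]}


@on("order.created")
def handle_order(payload):
    return {"action": "send_confirmation", "order_id": payload["order_id"]}


def handler(event: dict[str, Any], context: Any = None) -> dict[str, Any]:
    """Entry point: route the incoming event to its registered function."""
    func = HANDLERS.get(event.get("type", ""))
    if func is None:
        return {"status": 400, "body": json.dumps({"error": "unknown event type"})}
    return {"status": 200, "body": json.dumps(func(event.get("payload", {})))}


print(handler({"type": "file.uploaded", "payload": {"key": "photos/cat.png"}}))
```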

Stateless execution

Each function execution is independent and does not store data between runs. Any required state must be stored externally (e.g., databases, storage services). This improves scalability but introduces additional complexity in managing data and workflows.
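A small sketch of what "state stored externally" can look like in code. The `KeyValueStore` interface and the in-memory stand-in are hypothetical. In a real system the store would be a managed database or cache.

```python
from typing import Optional, Protocol


class KeyValueStore(Protocol):
    """Minimal interface for an external store (hypothetical)."""
    def get(self, key: str) -> Optional[int]: ...
    def put(self, key: str, value: int) -> None: ...


class InMemoryStore:
    """Stand-in for a real database, used here only for local runs and tests."""
    def __init__(self) -> None:
        self._data: dict[str, int] = {}

    def get(self, key: str) -> Optional[int]:
        return self._data.get(key)

    def put(self, key: str, value: int) -> None:
        self._data[key] = value


def count_visit(user_id: str, store: KeyValueStore) -> int:
    """Stateless function: reads state, updates it, writes it back."""
    visits = (store.get(user_id) or 0) + 1
    store.put(user_id, visits)
    return visits


store = InMemoryStore()
count_visit("user-1", store)
print(count_visit("user-1", store))
```

The read-modify-write above is not atomic. With many parallel executions, a real store would need an atomic increment or a conditional write, which is part of the added complexity mentioned above.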

Managed infrastructure

The cloud provider handles:

- server provisioning

- scaling

- patching and maintenance

- high availability

However, development teams are still responsible for:

- application logic

- architecture design

- security configurations

- monitoring and cost control

Automatic scaling

Serverless platforms automatically scale functions based on incoming requests. This allows systems to handle traffic spikes without manual intervention. At the same time, uncontrolled scaling can lead to:

- unexpected costs

- hitting concurrency limits

- performance bottlenecks in downstream services (e.g., databases)

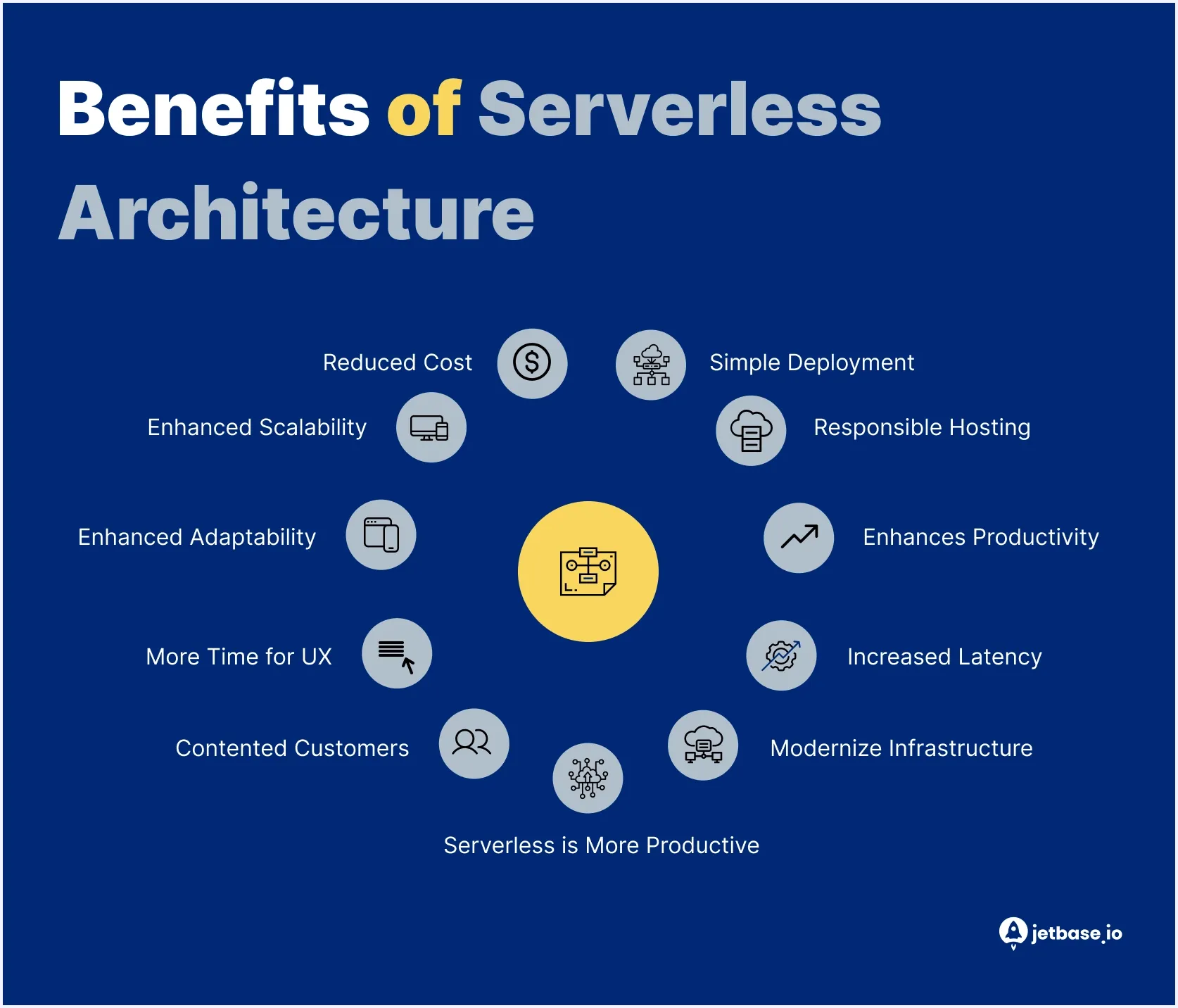

The Benefits of Serverless Architecture

As we explore the advantages and disadvantages of serverless architecture, it's important to understand that its benefits are highly dependent on workload type and system design.

These benefits are clearest when serverless is applied in the right scenarios.

Cost-Efficiency

Serverless is often considered cost-effective because it follows a pay-as-you-go model — you only pay for actual execution time instead of provisioning infrastructure in advance.

Cost savings are most noticeable when:

- workloads are irregular or unpredictable

- applications have idle periods

- traffic comes in bursts rather than constant load

In these cases, serverless eliminates the need to maintain always-on infrastructure, reducing wasted resources. This is one of the most important serverless benefits for startups and scalable products.

However, serverless can become more expensive when:

- workloads are long-running or compute-heavy

- functions are triggered very frequently

- execution time is not optimized

In high and stable load scenarios, traditional or container-based architectures may be more cost-efficient.

Enhanced Scalability

One of the key benefits of serverless architecture is automatic scaling. Functions scale up or down based on incoming requests without manual intervention.

In practice, this allows systems to:

- handle sudden traffic spikes

- process large volumes of events in parallel

- maintain performance under variable load

However, scaling is not unlimited. Teams need to consider:

- concurrency limits (maximum number of parallel executions)

- burst limits (how quickly scaling can ramp up)

- downstream bottlenecks (e.g., databases that cannot scale at the same rate)

Without proper architecture, automatic scaling can shift the bottleneck rather than eliminate it.
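A quick way to check whether scaling will hit one of these limits is Little's law: the number of executions in flight is roughly the arrival rate multiplied by the average duration. The sketch below uses hypothetical function names and assumes one database connection per concurrent execution.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_s: float) -> int:
    """Little's law: in-flight executions = arrival rate x average duration."""
    return math.ceil(requests_per_second * avg_duration_s)


def check_limits(requests_per_second, avg_duration_s, function_limit, db_max_connections):
    """Return warnings when estimated concurrency exceeds either limit."""
    needed = required_concurrency(requests_per_second, avg_duration_s)
    warnings = []
    if needed > function_limit:
        warnings.append(f"needs {needed} concurrent executions, limit is {function_limit}")
    if needed > db_max_connections:
        warnings.append(f"needs {needed} DB connections, database allows {db_max_connections}")
    return warnings


# 2,000 requests/s at 250 ms each fits a limit of 1,000 executions,
# but not a database that accepts only 200 connections.
for warning in check_limits(2000, 0.25, function_limit=1000, db_max_connections=200):
    print(warning)
```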

Faster Time to Market

Serverless architecture reduces the need for infrastructure setup and ongoing DevOps management, allowing teams to focus on building product features.

This has a direct impact on delivery speed:

- no server provisioning or environment configuration

- fewer DevOps dependencies

- simplified deployment workflows

The impact is especially noticeable for:

- startups building MVPs

- small product teams

- projects with limited engineering resources

As a result, teams can launch faster, iterate more frequently, and validate ideas with lower initial investment.

Optimizing Performance Through Scaling

Serverless improves application performance by automatically allocating resources based on demand.

Instead of pre-allocating capacity, the system:

- scales resources up during high load

- scales down during low activity

- ensures consistent response times under variable traffic

This dynamic scaling helps maintain stable performance without manual tuning. However, performance can still be affected by:

- cold starts

- external service dependencies

- inefficient function design

Reduced Operational Complexity

Serverless significantly reduces the operational burden on engineering teams by shifting infrastructure responsibilities to the cloud provider.

This eliminates the need for:

- server provisioning and maintenance

- patching and updates

- infrastructure scaling management

As a result:

- teams require less DevOps involvement

- smaller teams can manage more complex systems

- engineering effort shifts toward product development

However, teams still need to manage:

- application architecture

- monitoring and observability

- cost control

Improved Reliability

Serverless platforms are built on highly available, distributed infrastructure managed by cloud providers.

In practice, reliability is achieved through:

- multi-availability zone (Multi-AZ) redundancy

- automatic failover mechanisms

- provider-managed fault tolerance

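If an execution environment fails, the platform starts a new one for subsequent requests, and many platforms automatically retry failed asynchronous invocations, which minimizes downtime. Synchronous callers usually need to retry on their own. However, reliability also depends on: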

- external dependencies (databases, APIs)

- proper error handling and retries

- system architecture design
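Error handling and retries for an external dependency can be sketched as follows. This is one common pattern (exponential backoff with jitter), not the only option. `TransientError` and `call_with_retries` are hypothetical names.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type for failures that are worth retrying."""


def call_with_retries(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay_s: float = 0.2,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry a flaky call with exponential backoff and full jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and let the platform or caller handle it
            # Wait a random time up to base * 2^(attempt - 1) before retrying.
            sleep(random.uniform(0, base_delay_s * 2 ** (attempt - 1)))
    raise ValueError("max_attempts must be at least 1")
```

Retried operations should be idempotent, since the same request may reach the dependency more than once.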

Reduced Latency (Context-Dependent)

Serverless can reduce latency in specific scenarios, particularly when combined with edge computing.

Latency improvements are possible when:

- functions are executed closer to end users (edge locations)

- requests are processed without centralized infrastructure delays

However, this is not a universal benefit. Latency may increase due to:

- cold starts

- network dependencies

- centralized service integrations

As a result, serverless improves latency only when architecture is designed to support edge execution and minimize dependencies.

Limitations and Challenges of Serverless Architecture

Understanding the pros and cons of serverless architecture requires looking beyond its advantages. While serverless simplifies infrastructure management and scaling, it also introduces architectural, operational, and cost-related challenges that teams need to consider before adoption.

These limitations show that serverless benefits come with trade-offs that need to be carefully evaluated.

Vendor Lock-In

Serverless solutions are tightly coupled with cloud provider ecosystems (e.g., AWS Lambda, Azure Functions, Google Cloud Functions). This creates a dependency on provider-specific services, APIs, and configurations. In practice, this makes migration between providers complex and costly. Common mitigation strategies include:

- using Infrastructure as Code (IaC) (e.g., Terraform) to standardize deployments

- abstracting business logic from provider-specific services

- designing systems with partial portability in mind

However, fully avoiding lock-in often introduces additional complexity, especially in multi-cloud setups, which require more engineering effort and coordination.
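Abstracting business logic from provider-specific services often comes down to keeping the core function free of cloud types and wrapping it in a thin adapter. The sketch below is illustrative. The event shape (a JSON string under `body`, a `statusCode` in the response) is an assumption modeled on typical HTTP proxy events, and the discount rule is invented for the example.

```python
import json
from typing import Any


def calculate_discount(total: float, is_returning_customer: bool) -> float:
    """Provider-independent business logic: no cloud SDK types here."""
    rate = 0.10 if is_returning_customer else 0.0
    return round(total * (1 - rate), 2)


def http_adapter(event: dict[str, Any], context: Any = None) -> dict[str, Any]:
    """Thin provider-facing wrapper (event shape is an assumption)."""
    body = json.loads(event.get("body") or "{}")
    price = calculate_discount(float(body["total"]), bool(body.get("returning", False)))
    return {"statusCode": 200, "body": json.dumps({"price": price})}


print(http_adapter({"body": json.dumps({"total": 100, "returning": True})}))
```

Moving to another provider then means rewriting the adapter, while `calculate_discount` and its tests stay unchanged.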

Performance Issues

Serverless applications may experience performance variability, particularly due to cold starts. A cold start occurs when the platform has to initialize a new execution environment before running a function, typically after a period of inactivity or when traffic scales out to additional instances. The delay can vary depending on:

- runtime (e.g., Node.js vs Java)

- function size and dependencies

- cloud provider configuration

This can impact latency-sensitive applications. Common mitigation approaches include:

- provisioned concurrency (keeping functions warm)

- optimizing function size and dependencies

- using edge functions where applicable
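Another widely used technique, in addition to the ones listed, is to create expensive resources once per execution environment and reuse them across warm invocations. The sketch below simulates this with a hypothetical `create_expensive_client` function standing in for an SDK client, database connection, or model load.

```python
import time

_client = None  # cached across invocations while the environment stays warm


def create_expensive_client() -> dict:
    """Hypothetical stand-in for slow setup (SDK client, DB connection, model load)."""
    time.sleep(0.05)  # simulate initialization cost
    return {"created_at": time.monotonic()}


def get_client() -> dict:
    """Create the client on first use, then reuse it on later invocations."""
    global _client
    if _client is None:
        _client = create_expensive_client()
    return _client


def handler(event, context=None):
    client = get_client()
    return {"client_id": id(client)}


first = handler({})   # pays the setup cost, as a cold start would
second = handler({})  # reuses the cached client
print(first == second)
```

This does not remove cold starts. It only ensures that the setup cost is paid once per environment rather than on every request.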

Monitoring and Debugging

Monitoring serverless systems is more complex than in traditional architectures due to their distributed and ephemeral nature. Challenges include:

- lack of persistent execution environments

- difficulty tracing requests across multiple functions

- limited visibility into runtime behavior

To address this, teams need:

- distributed tracing tools (e.g., AWS X-Ray, OpenTelemetry)

- centralized logging systems

- advanced observability practices

Without proper tooling, identifying performance issues or failures becomes significantly harder.
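Even before adopting a full tracing tool, structured logs with a shared correlation ID make it possible to follow one request across several functions. The sketch below is a minimal illustration. The field names (`correlation_id`, `duration_ms`) and the function name `resize_image` are assumptions, not a standard.

```python
import json
import time
import uuid


def log_event(message: str, correlation_id: str, **fields) -> str:
    """Emit one JSON log line that a central log system can index."""
    record = {"ts": time.time(), "correlation_id": correlation_id, "message": message, **fields}
    line = json.dumps(record)
    print(line)
    return line


def handler(event: dict, context=None) -> dict:
    # Reuse the caller's ID when present so one request can be followed across functions.
    correlation_id = event.get("correlation_id") or str(uuid.uuid4())
    started = time.perf_counter()
    log_event("started", correlation_id, function="resize_image")
    result = {"ok": True, "correlation_id": correlation_id}
    duration_ms = round((time.perf_counter() - started) * 1000, 2)
    log_event("finished", correlation_id, function="resize_image", duration_ms=duration_ms)
    return result


handler({"correlation_id": "req-123"})
```

Passing the returned `correlation_id` to any downstream function keeps the chain searchable in a centralized logging system.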

Limited Control Over the Environment

Serverless platforms abstract away infrastructure, which reduces operational effort but also limits control. Teams typically cannot:

- access the underlying operating system

- customize runtime environments beyond predefined options

- control low-level performance configurations

These constraints can be problematic for:

- applications with specific system dependencies

- performance-critical workloads

- legacy systems requiring custom environments

Complex State Management

Serverless functions are stateless by design, meaning they do not retain data between executions. To manage state, teams must rely on external services such as:

- databases (SQL/NoSQL)

- in-memory stores (e.g., Redis)

- object storage (e.g., S3)

While this enables scalability, it also:

- increases architectural complexity

- introduces additional latency

- requires careful data consistency management

Cost Unpredictability at Scale

Although serverless is cost-efficient for variable workloads, costs can become unpredictable at scale. This typically happens when:

- functions are triggered at high frequency

- execution time is not optimized

- inefficient architecture leads to excessive invocations

Because billing is tied to execution, even small inefficiencies can scale into significant costs under heavy usage.
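One common source of excessive invocations is handling every record in its own function call. Where the trigger supports it, batching cuts the invocation count sharply. The helper names below are hypothetical, and the sketch only covers the arithmetic and grouping, not a specific provider's batch settings.

```python
import math
from typing import Iterator, Sequence, TypeVar

T = TypeVar("T")


def batches(records: Sequence[T], batch_size: int) -> Iterator[Sequence[T]]:
    """Yield consecutive slices of at most batch_size records."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(records), batch_size):
        yield records[start:start + batch_size]


def invocation_count(total_records: int, batch_size: int) -> int:
    """Number of invocations needed when each one handles one batch."""
    return math.ceil(total_records / batch_size)


print(invocation_count(1_000_000, 1))
print(invocation_count(1_000_000, 100))
```

Larger batches reduce per-request fees but lengthen each execution, so the batch size still has to fit within the platform's time limit.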

Execution Time Limits

Serverless platforms impose maximum execution time limits for functions, typically measured in minutes (for example, 15 minutes per invocation on AWS Lambda; limits vary by provider and plan). This creates constraints for:

- long-running processes

- heavy data processing tasks

- synchronous workflows

To work around this, teams often need to redesign systems using:

- asynchronous workflows

- task queues

- function chaining
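Function chaining can be sketched as processing work until a time budget is nearly used up, then handing a cursor to the next invocation. The example below is a simplified illustration. `process_with_budget` is a hypothetical helper, and in a real system the returned index would be passed on through a queue or workflow service rather than a local loop.

```python
import time
from typing import Callable, Optional, Sequence


def process_with_budget(
    items: Sequence[str],
    start_index: int,
    budget_s: float,
    process: Callable[[str], None],
    clock: Callable[[], float] = time.monotonic,
) -> Optional[int]:
    """Process items until the time budget runs out.

    Returns the index to resume from, or None when all items are done.
    """
    deadline = clock() + budget_s
    for index in range(start_index, len(items)):
        if clock() >= deadline:
            return index  # hand this cursor to the next invocation
        process(items[index])
    return None


# Local simulation of chained invocations.
items = [f"record-{n}" for n in range(5)]
cursor: Optional[int] = 0
while cursor is not None:
    cursor = process_with_budget(items, cursor, budget_s=1.0, process=print)
```

The budget should sit comfortably below the platform's hard limit so the function can return its cursor before being terminated.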

Compliance and Security Concerns

In regulated industries (e.g., healthcare, fintech), serverless introduces additional compliance challenges. These include:

- limited control over infrastructure location and configuration

- dependency on provider security practices

- complexity in meeting strict data governance requirements

Organizations must ensure that:

- the cloud provider meets compliance standards (e.g., HIPAA, GDPR)

- proper access control and data handling policies are implemented

Vendor-Imposed Quotas

Cloud providers enforce limits (quotas) on serverless usage, including:

- maximum concurrency

- request rates

- resource allocation per function

These limits are usually sufficient for most applications but can become a bottleneck when:

- systems scale rapidly

- traffic spikes exceed expected thresholds

- quotas are not proactively increased

Without planning, this can lead to throttling and degraded performance.

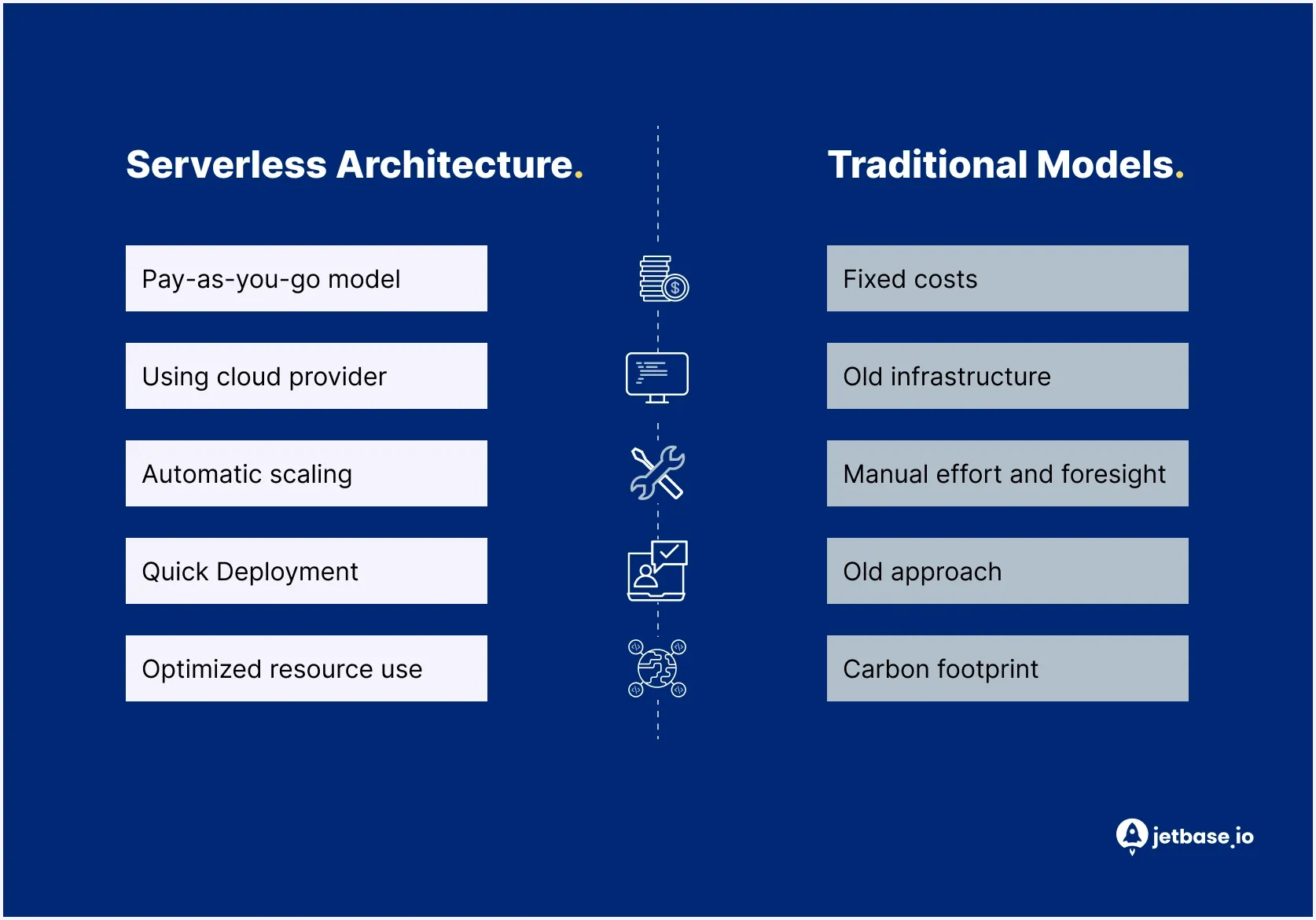

Serverless vs. Traditional Models Analysis

Comparing serverless to traditional (self-managed) infrastructure highlights fundamental differences in how systems are built, scaled, and maintained, and clarifies where the benefits of serverless apply in real-world systems. The choice between these approaches depends on workload type, team structure, and long-term cost considerations.

Pricing Model

Traditional infrastructure relies on provisioned resources, where companies pay for allocated capacity regardless of actual usage. This makes costs more predictable but often leads to underutilized resources.

Serverless follows a pay-as-you-go model, where billing is based on actual execution time and the number of requests.

In practice:

- serverless is more cost-efficient for variable or unpredictable workloads

- traditional infrastructure is often more cost-efficient for stable, consistently high workloads

This comparison clearly shows how the benefits of serverless differ depending on workload patterns.
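The crossover point between the two pricing models can be estimated with simple arithmetic. The sketch below compares a usage-based bill with a fixed monthly bill for provisioned capacity. The function names are hypothetical, the rates in the example are illustrative assumptions, and engineering and operations costs are left out.

```python
def serverless_monthly_cost(requests, avg_duration_s, memory_gb,
                            price_per_request, price_per_gb_second):
    """Usage-based cost: per-request fee plus compute time."""
    return requests * (price_per_request + avg_duration_s * memory_gb * price_per_gb_second)


def break_even_requests(fixed_monthly_cost, avg_duration_s, memory_gb,
                        price_per_request, price_per_gb_second):
    """Monthly request volume at which usage-based cost equals a fixed bill."""
    cost_per_request = price_per_request + avg_duration_s * memory_gb * price_per_gb_second
    return fixed_monthly_cost / cost_per_request


# Assumed inputs: $150/month of provisioned capacity, 200 ms at 0.5 GB per request.
volume = break_even_requests(
    fixed_monthly_cost=150,
    avg_duration_s=0.2,
    memory_gb=0.5,
    price_per_request=0.0000002,    # assumed rate
    price_per_gb_second=0.0000167,  # assumed rate
)
print(f"break-even at about {volume / 1_000_000:.0f} million requests per month")
```

Below the break-even volume the usage-based model is cheaper under these assumptions. Above it, the fixed bill wins, provided the provisioned capacity can actually serve that load.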

Operational Overhead and Maintenance

In traditional setups, teams are responsible for managing:

- servers and environments

- scaling configurations

- patching and updates

This requires dedicated DevOps effort and ongoing maintenance. Serverless shifts these responsibilities to the cloud provider, eliminating infrastructure management and reducing operational overhead.

As a result:

- smaller teams can manage complex systems

- engineering effort shifts toward product development instead of maintenance

Scalability and Performance

Scaling in traditional infrastructure requires planning and manual configuration. Teams must provision resources in advance and adjust capacity based on expected load. Serverless scales automatically based on incoming requests, allowing systems to handle traffic spikes without manual intervention. However:

- serverless scaling is subject to concurrency and provider limits

- traditional systems offer more predictable performance under constant load

Innovation and Time to Market

Traditional infrastructure often slows down development due to setup complexity, environment configuration, and deployment pipelines. Serverless reduces these barriers by removing infrastructure dependencies. In practice:

- teams can deploy faster and iterate more frequently

- startups and small teams benefit the most from reduced setup time

This makes serverless particularly effective for MVP development and rapid experimentation.

Environmental Impact

Traditional infrastructure often leads to over-provisioning, where unused resources still consume energy. Serverless optimizes resource usage by running code only when needed, which can reduce overall energy consumption. However, the environmental impact ultimately depends on workload patterns and system design.

When to Choose Each Approach

Choose serverless when:

- you are building an MVP or early-stage product

- workloads are event-driven or unpredictable

- speed of development is a priority

- you want to minimize DevOps overhead

Choose traditional (self-managed or provisioned infrastructure) when:

- workloads are stable and consistently high

- cost predictability is critical

- you need full control over infrastructure and runtime

- performance consistency is a priority

Ultimately, the choice comes down to balancing cost, scalability, and operational control.

Serverless vs. Microservices: A Question of Choice?

The choice between serverless and microservices is often misunderstood. These are not competing approaches but concepts that operate at different levels. Knowing the trade-offs of serverless is essential when deciding how to combine the two in real-world systems.

Microservices are an architectural pattern — a way of structuring an application as a collection of small, independent services. Serverless, on the other hand, is an execution model — a way to run and scale those services without managing infrastructure.

In practice, serverless can be used to implement microservices. Each function can represent a small, independent service that responds to specific events, making it easier to build modular and scalable systems. However, microservices do not require serverless. Teams often run microservices on:

- containers (e.g., Kubernetes)

- virtual machines

- self-managed or provisioned infrastructure

When to Combine Serverless and Microservices

Combining these approaches works well when:

- services are event-driven and loosely coupled

- workloads are variable or unpredictable

- teams want to reduce infrastructure management

In this setup, serverless simplifies deployment and scaling, while microservices provide clear separation of concerns.

When to Use Microservices Without Serverless

Running microservices on provisioned infrastructure may be a better choice when:

- services are long-running or stateful

- workloads are stable and consistently high

- teams require fine-grained control over runtime and performance

Key Trade-Offs to Consider

While combining serverless with microservices offers flexibility, it also introduces complexity. Teams should consider:

- increased system fragmentation (many small functions/services)

- more complex monitoring and debugging

- reliance on provider-specific services

- potential cost growth with high request volumes

Examples of Serverless Architecture

Serverless architecture is widely used across different types of applications, especially where workloads are event-driven, variable, or require fast deployment.

Below are common real-world use cases that highlight the pros and cons of serverless architecture, showing when it works best and what trade-offs teams should consider.

| Use Case | Problem | Why Serverless Fits | Risks & Limitations |
|---|---|---|---|
| API Backends | Building scalable APIs requires handling unpredictable traffic, managing infrastructure, and ensuring high availability. | Serverless allows APIs to scale automatically based on incoming requests without pre-provisioning infrastructure. This makes it easier to handle traffic spikes and reduces operational overhead. | |
| Webhooks and Event Processing | Systems often need to react to external events (e.g., payments, user actions, third-party integrations) in real time. | Serverless functions can be triggered instantly by incoming events, making them ideal for webhook handling and event-driven workflows. | |
| Scheduled Tasks (Cron Jobs) | Applications often require recurring background jobs such as data cleanup, report generation, or syncing systems. | Serverless platforms support scheduled triggers, allowing teams to run tasks without maintaining dedicated servers. | |
| Data Transformation Pipelines | Processing large volumes of data (e.g., logs, uploads, analytics events) requires scalable and efficient pipelines. | Serverless enables parallel processing of data streams and events, making it easy to scale pipelines dynamically based on load. | |
| Startup MVP Development | Startups need to launch quickly with limited resources while avoiding upfront infrastructure investment. | Serverless allows teams to build and deploy MVPs without setting up infrastructure, reducing time to market and initial costs. | |

The Future of Serverless Computing

Serverless computing is evolving from a niche approach into a core component of modern cloud architectures. The focus is shifting toward better performance, more control, and integration with other technologies.

| Trend | What It Means in Practice | Business Impact |
|---|---|---|
| Edge Computing | Functions run closer to end users in distributed locations, reducing the distance between users and processing logic | Lower latency, improved performance for real-time applications, better user experience for global products |
| Serverless Containers | Containerized applications run in a serverless model with automatic scaling and managed infrastructure | Greater control over runtime and dependencies, better support for complex workloads, fewer limitations than standard functions |
| AI and Data Processing | Serverless is used for on-demand inference, event-driven data processing, and automated pipelines | Cost-efficient AI execution, ability to scale data processing dynamically, faster development of data-driven features |
| Hybrid Architectures | Serverless is combined with traditional infrastructure based on workload requirements | Better cost optimization, more flexible architecture design, balance between scalability and control |

In practice, most modern systems combine these approaches rather than relying on serverless alone.

These trends show how the trade-offs of serverless architecture shift as the technology matures.

Are You Ready to Migrate to Serverless?

Adopting serverless architecture is not just a technical decision. It requires evaluating workloads, risks, and long-term scalability with a clear view of the trade-offs. Before moving to serverless, teams should assess whether their systems and goals align with this model.

Serverless Readiness Checklist

Serverless is a strong fit if most of the following conditions apply:

- workloads are event-driven (APIs, webhooks, background jobs)

- traffic is variable or unpredictable

- the system does not rely on long-running processes

- fast time to market is a priority

- the team wants to reduce DevOps overhead

- architecture can be designed as stateless

If these conditions are not met, serverless may introduce more complexity than value.

Key Risks to Consider

Before adoption, teams should be aware of the most common risks:

- cost growth at scale due to frequent executions

- performance variability (e.g., cold starts)

- vendor lock-in and dependency on provider services

- complex system architecture (especially with many functions)

- limited control over runtime and infrastructure

Understanding these risks early helps avoid costly redesigns later.

Gradual Migration Strategy

A successful transition to serverless should be incremental rather than immediate.

A typical approach includes:

- Identifying low-risk, event-driven components

- Migrating isolated workloads (e.g., background jobs, APIs)

- Monitoring performance, cost, and reliability

- Expanding serverless usage based on results

This reduces risk and allows teams to adapt architecture gradually.

Pilot-First Approach

Instead of full migration, teams should start with a pilot project.

A strong pilot is:

- small in scope but meaningful

- easy to isolate from core systems

- measurable in terms of performance and cost

A successful pilot typically demonstrates:

- reduced infrastructure overhead

- stable performance under load

- predictable cost behavior

When to Avoid Serverless

Serverless may not be the right choice when:

- workloads are long-running or compute-intensive

- traffic is stable and consistently high

- strict control over infrastructure is required

- latency must be fully predictable

In these cases, traditional or hybrid architectures are often more suitable.

Ultimately, the advantages of serverless depend on how well the architecture aligns with your specific product and workload requirements.
