Quality Assurance (QA) is vital in software development: it acts as the gatekeeper that ensures final products meet the desired standards and are free from errors. Yulia Onischenko, QA Lead at JetBase, explains how automating QA processes can cut costs by up to 75% while raising the quality and efficiency of software delivery.

What is QA?

Quality Assurance (QA) in software development is a systematic process that ensures the quality and reliability of software products. It involves a set of activities intended to monitor the software development process and to prevent, detect, and rectify defects. The primary goal of QA is to improve development and test processes so that bugs do not arise during development.

Manual and Automated Testing — Differences

Deciding the Approach

Yulia emphasizes that a balanced combination of both, chosen according to project needs, is often the optimal approach. Both testing types are important, and neither can stand alone.

Scale and Reusability

Automated tests are better suited to large projects where tests are repetitive and must be run many times, while manual testing is efficient for smaller projects and exploratory testing.

Accuracy and Reliability

Automated testing ensures higher accuracy and reliability in results due to reduced human error, whereas manual testing, although intuitive, is prone to inaccuracies.

Cost and Resource Optimization

While automated testing may have higher upfront costs, it optimizes resources and proves cost-effective in the long run. The move to automation is usually easy for a team to accept, because the number of manual test cases keeps growing and running them takes more and more time.
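The trade-off between upfront cost and long-run savings can be made concrete with a small break-even calculation. The sketch below is only an illustration: the function name and every figure in it are hypothetical and do not come from any JetBase project.

```python
import math


def break_even_runs(setup_cost: float,
                    manual_cost_per_run: float,
                    automated_cost_per_run: float) -> int:
    """Return the number of regression runs needed for the cumulative
    savings of automation to cover its one-time setup cost.

    All values share one currency unit. The numbers used below are
    hypothetical and only illustrate the arithmetic.
    """
    saving_per_run = manual_cost_per_run - automated_cost_per_run
    if saving_per_run <= 0:
        raise ValueError("automation never pays off if a run is not cheaper")
    return math.ceil(setup_cost / saving_per_run)


# Hypothetical figures: 6000 to build the suite, 400 per manual run,
# 100 per automated run (maintenance and infrastructure).
print(break_even_runs(6000, 400, 100))  # 20
```

With these assumed figures the suite pays for itself after 20 regression runs; a project that runs regression on every release reaches that point far sooner than one that releases twice a year.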

User Experience Assessment

Manual testing is indispensable for assessing user experience and interface-related aspects, where human insight is crucial, whereas automated testing may fall short in evaluating subjective user experiences.

Example

Let's walk through an automated test scenario that mimics a user's journey through the contact form on the JetBase website. It shows how quickly QA can test a feature once the checks are automated.

Positive Scenario

The first scenario ("Check positive user flow for the contact form") details a successful submission process, where all the required fields are filled out correctly, including a name, email, website, budget selection, service category, detailed message, and a privacy policy agreement. This scenario ends with a confirmation that a success message is received, implying that the form handles data correctly and user feedback is appropriately displayed.

Negative Scenarios

The subsequent scenarios focus on negative testing, where one or more fields are filled incorrectly or not at all, to ensure that the form validation is working as expected. It includes cases like submitting the form without filling in all the required fields, entering an invalid email address, omitting the budget selection, neglecting to choose a service category, and leaving the details field empty. Each scenario is followed by an assertion that checks for the correct error message, ensuring that the form provides necessary feedback to the user, prompting them to correct their input.

The script below is an example of how an automated test is structured and executed with the Cypress framework, a powerful tool that we use for web application testing.

Feature: Contact form

Description: This test case is checking positive and negative scenarios for the contact form

Background:

Given I go to the "Contact" page

Scenario: Check positive user flow for the contact form

When I type "Elon Musk" into name

And I type "elon.musk@tesla.com" into email

And I type "tesla.com" into website

And I choose "$5000 - $10000" as budget

And I choose "E-commerce" as service

And I type "AI autopilot car with great interface" into details

And I check the privacy

And I click the [Send message] button

Then I receive success message

Scenario: Check negative scenario for the required fields of the contact form

When I type "Elon Musk" into name

And I click the [Send message] button

Then I receive error message "Please fill a valid email!"

When I type "elon.musk@teslacom" into email

And I click the [Send message] button

Then I receive error message "Please fill a valid email!"

When I type "elon.musk@tesla.com" into email

And I type "tesla.com" into website

And I click the [Send message] button

Then I receive error message "Budget range is required!"

When I choose "$5000 - $10000" as budget

And I click the [Send message] button

Then I receive error message "Category range is required!"

When I choose "E-commerce" as service

And I click the [Send message] button

Then I receive error message "Please fill the details field!"

When I type "AI autopilot car with great interface" into details

And I click the [Send message] button

Then I receive error message "Check the terms and conditions!"

The script above is a test case for the website's contact form, written in Gherkin syntax and designed to validate both positive and negative scenarios. The structured format of the Gherkin language makes the script both technical and readable, so the desired behaviors of the web application are clear and testable.
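The negative scenario implies that the form reports one error at a time, in a fixed order. The sketch below models that order in plain Python so the expected messages can be replayed step by step. It is an assumed model inferred from the scenario, not the form's real validation code: the function name, the dictionary keys, and the deliberately simple email pattern are all hypothetical. Only the error messages and input values are copied from the script.

```python
import re
from typing import Optional

# Deliberately simple pattern (an assumption): something@domain.tld
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def first_error(form: dict) -> Optional[str]:
    """Return the first validation message the scenario expects, or None."""
    if not EMAIL_PATTERN.fullmatch(form.get("email", "")):
        return "Please fill a valid email!"
    if not form.get("budget"):
        return "Budget range is required!"
    if not form.get("service"):
        return "Category range is required!"
    if not form.get("details"):
        return "Please fill the details field!"
    if not form.get("privacy"):
        return "Check the terms and conditions!"
    return None


# Each step adds the fields typed in the scenario, then checks the message.
SCENARIO_STEPS = [
    ({}, "Please fill a valid email!"),
    ({"email": "elon.musk@teslacom"}, "Please fill a valid email!"),
    ({"email": "elon.musk@tesla.com", "website": "tesla.com"},
     "Budget range is required!"),
    ({"budget": "$5000 - $10000"}, "Category range is required!"),
    ({"service": "E-commerce"}, "Please fill the details field!"),
    ({"details": "AI autopilot car with great interface"},
     "Check the terms and conditions!"),
]

form = {"name": "Elon Musk"}
for update, expected in SCENARIO_STEPS:
    form.update(update)
    assert first_error(form) == expected, (form, expected)

form["privacy"] = True
print(first_error(form))  # None: the fully completed form passes
```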

We also adhere to the best practice of writing tests independently. This approach has several advantages.

Isolation and Modularity

Independent tests can be developed without being reliant on the implementation details of the code. This isolation ensures that tests focus on specific functionalities or units without being affected by changes in other parts of the codebase. It allows for modular development and testing.

Easier Maintenance

When tests are separate from the code, modifications or updates to the codebase are less likely to cause problems in the tests. This independence ensures that changes in code structure or implementation won’t automatically render the tests invalid or obsolete.

Clarity and Readability

Independent tests tend to be clearer and more readable because they focus solely on verifying specific functionalities or units. This clarity makes it easier for developers to understand the purpose of each test case.

Improved Collaboration

Independent tests can be written concurrently by multiple team members, enabling parallel development and testing. This helps in faster development cycles and facilitates collaboration among team members.

Better Debugging

When tests are independent, it's easier to pinpoint the source of failures or bugs. Developers can identify issues more quickly as they know the specific functionality or unit being tested.

Encourages Better Design

Writing tests independently often encourages developers to create code that is more modular, loosely coupled, and easily testable from the start. This can lead to better-designed software overall.

Supports Continuous Integration/Deployment (CI/CD)

Independent tests are well-suited for automated testing processes in CI/CD pipelines. They can be run in parallel, allowing for faster feedback cycles and quicker integration of changes into the main codebase.
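As a rough illustration of what independence means in practice, the sketch below gives every test its own freshly built data and then runs the tests in a random order. It uses Python's standard `unittest` module rather than Cypress, and `valid_form`, `missing_fields`, and the list of required fields are hypothetical helpers inferred from the contact form example, not code from the project.

```python
import random
import unittest

REQUIRED_FIELDS = ("email", "budget", "service", "details", "privacy")


def valid_form() -> dict:
    """Return a brand-new, fully valid form so no test shares state."""
    return {
        "name": "Elon Musk",
        "email": "elon.musk@tesla.com",
        "budget": "$5000 - $10000",
        "service": "E-commerce",
        "details": "AI autopilot car with great interface",
        "privacy": True,
    }


def missing_fields(form: dict) -> list:
    """Hypothetical stand-in for validation: list empty required fields."""
    return [field for field in REQUIRED_FIELDS if not form.get(field)]


class ContactFormTests(unittest.TestCase):
    def test_complete_form_is_accepted(self):
        self.assertEqual(missing_fields(valid_form()), [])

    def test_missing_budget_is_reported(self):
        form = valid_form()
        del form["budget"]
        self.assertEqual(missing_fields(form), ["budget"])

    def test_unchecked_privacy_is_reported(self):
        form = valid_form()
        form["privacy"] = False
        self.assertEqual(missing_fields(form), ["privacy"])


# Run the tests in a random order: independent tests pass regardless.
names = list(unittest.defaultTestLoader.getTestCaseNames(ContactFormTests))
random.shuffle(names)
suite = unittest.TestSuite(ContactFormTests(name) for name in names)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Because no test relies on data left behind by another, the same suite can be split across parallel CI workers without changing its outcome.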

Reasons to Start Automated Testing

Superior Quality

Yulia observes that automation minimizes human errors, delivering higher product quality and reliability.

Time and Cost Efficiency

Automated tests run unsupervised, which significantly reduces testing time and staffing costs. This is what makes a reduction in QA costs of up to 75% possible.

Enhanced Productivity

Accelerated testing processes via automation enable faster development cycles and free up QA specialists to focus on more complex and critical tasks.

When Should You Shift to Automation?

Shifting to automation is a pivotal decision in the software development lifecycle. Yulia, QA Lead at JetBase, shares her insights on identifying the right time to integrate automation into the testing process.

Scale and Complexity Increase

Automated testing becomes pivotal when the scope and intricacy of a project escalate. This is especially true for extensive projects, where the limitations of manual testing become glaringly evident.

When you notice the scale and complexity of a project outgrowing the capacity of manual testing, it's high time to bring automation into play. Automation can handle the intricate details and vast scope of large projects, ensuring comprehensive coverage and reliability.

Stable Requirements

Yulia suggests moving to automation once the project requirements are finalized and stable.

Automated testing excels in environments with solidified requirements. It reduces the risk of discrepancies and ensures sustained efficiency and reliability in the testing process. It’s imperative to have a clear and stable set of requirements before incorporating automation to avoid unnecessary complications and redundancies.

Repetitive Testing Requirements

Automation is the go-to solution when the project necessitates recurrent testing. It not only assures precision but also conserves invaluable time and resources in the long run.

In scenarios where testing needs to be executed repeatedly, automation is a lifesaver. It eliminates the tediousness of manual repetition and significantly reduces the margin of error, enhancing the overall efficiency of the testing process.

Performance Testing is Crucial

When it comes to assessing software performance under varied and extensive conditions, automated testing is unparalleled. It provides insights that are practically unattainable through manual testing.

Automated testing is indispensable when precise performance evaluation is crucial. It can simulate a myriad of conditions and user interactions to assess how the software performs, providing detailed and reliable insights.

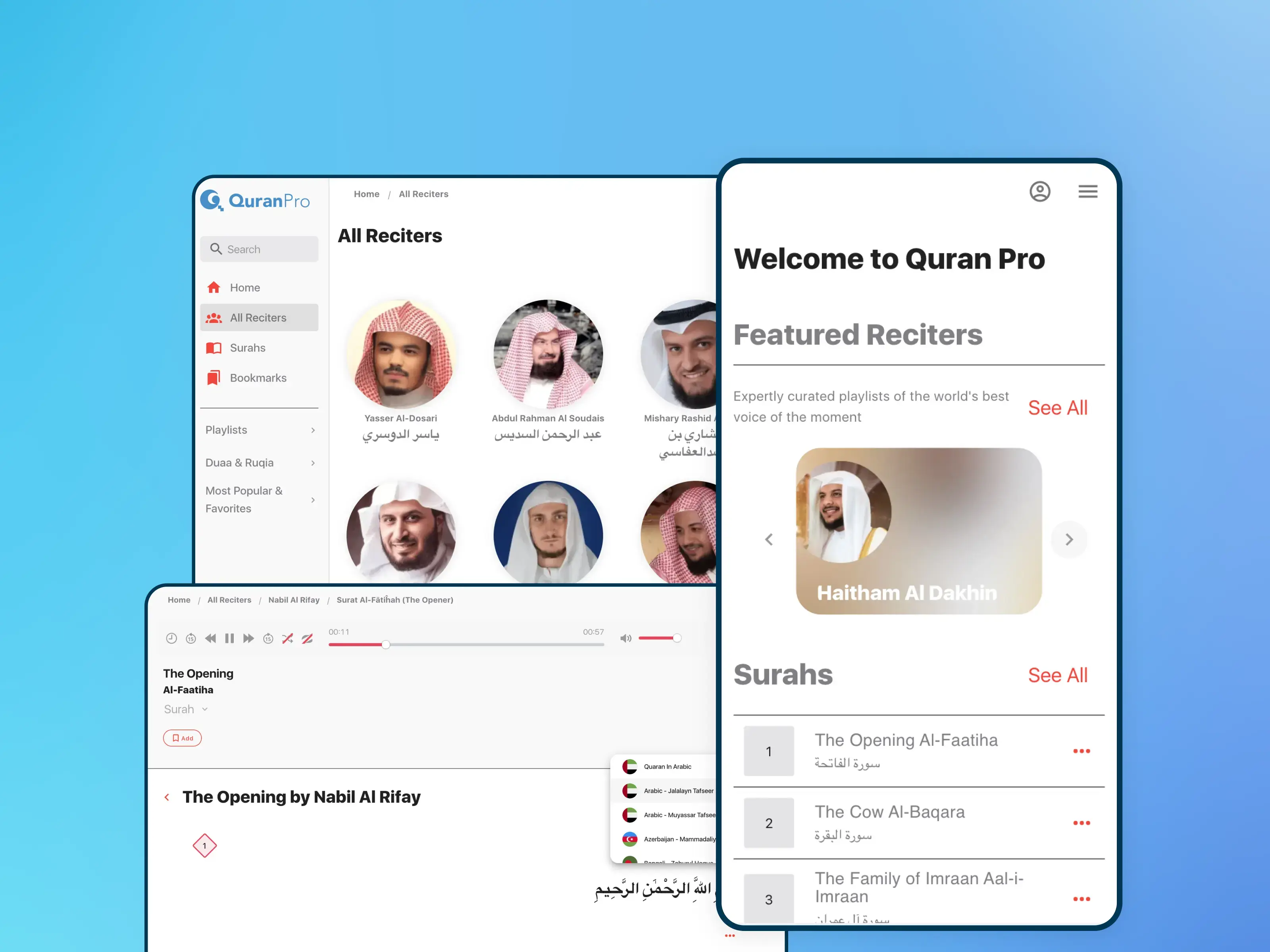

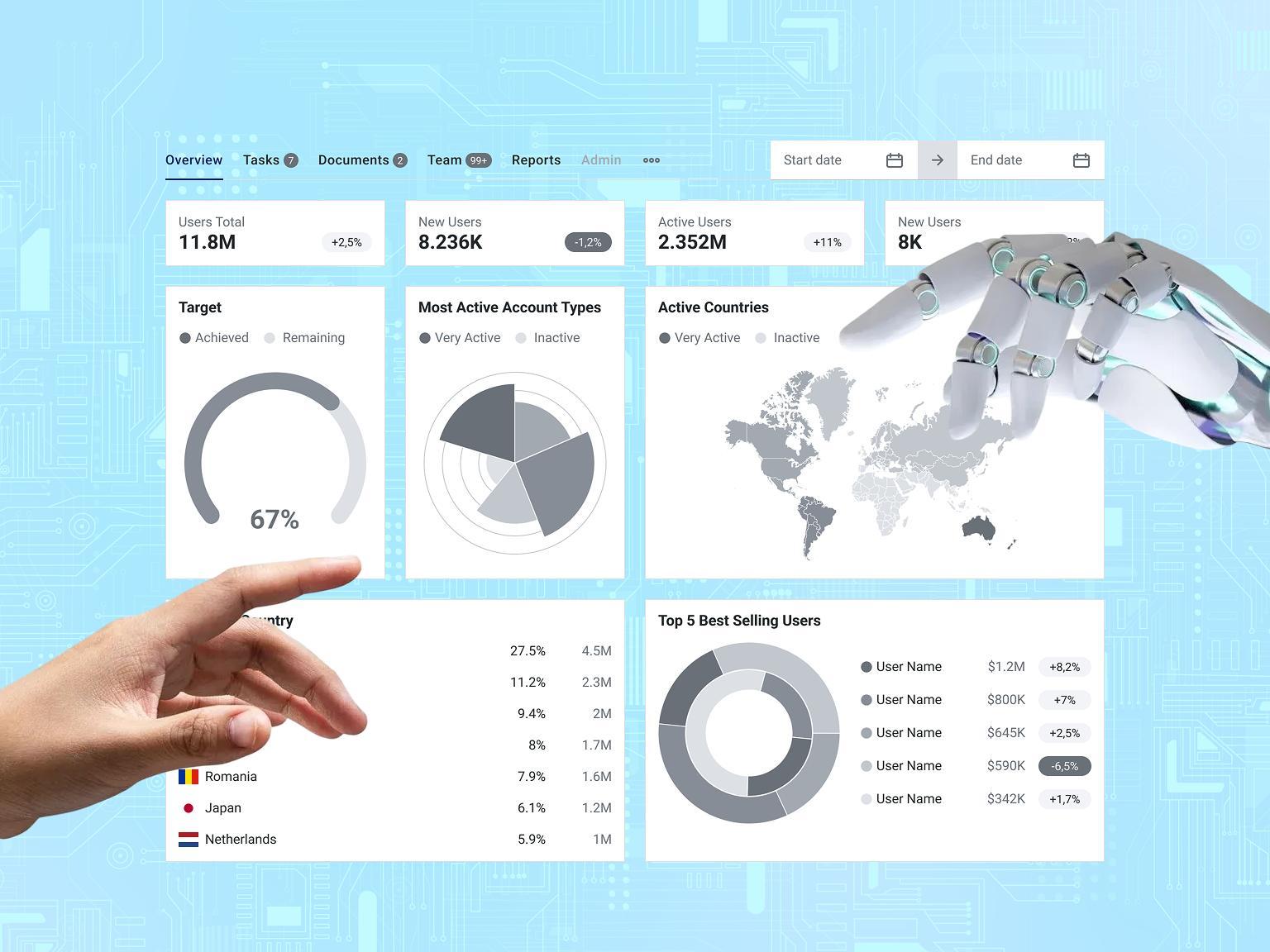

Our Cases

Let’s explore three distinct case studies, each showcasing the transformative impact of implementing automated testing in diverse project environments.

Case #1

Project

An educational platform specializing in internet security that offers practical learning scenarios across various expertise levels in cybersecurity.

Results

- Integration of automated testing within the development cycle;

- Regression testing takes up to 2 hours;

- Boosted test coverage to 90%;

- 70% reduction in production bugs.

Future goals

- Maintain near 100% test coverage;

- Enrich testing scenarios based on user interactions;

- Regular review and maintenance;

- Refactoring and cleanup;

- Continuous review and feedback loop;

- Adapt to application changes.

Description

The initiative to implement automated testing stemmed from the lack of a dedicated QA team. This integration, which took place during the development phase rather than at the outset, aimed to ensure consistent functionality and enable developers to swiftly address any issues introduced. The primary goals were to establish thorough documentation for testing processes and achieve approximately 90% test coverage.

The introduction of automated testing in this project entailed several stages. The initial phase involved integrating testing into the existing development workflow, followed by efforts to attain the target of 90% test coverage. A significant challenge was continuously updating the tests in line with the rapidly evolving features of the platform.

As a result, the project successfully reduced the incidence of production bugs by 70% and markedly decreased testing times. Future objectives include maintaining test coverage near 100% and enriching testing scenarios based on user interactions. A particular challenge for this platform was its multitude of client-specific configurations, which complicated manual testing.

Automated testing proved crucial in efficiently managing these complexities, ensuring thorough and faster test coverage.

Case #2

Project

An ERP solution for the oil and gas industry, aiming to enhance operational efficiency and resource management.

Results

- QA costs cut by 75% with automated testing;

- Decreased regression testing from 8 hours to 2 hours;

- Achieved 80% functionality coverage with automation;

- Shifted focus from repetitive tasks to innovation.

Future goals

- Increase the percentage of autotest coverage.

Description

The impetus for implementing automated testing stemmed from a need to expedite pre-release checks and ensure early detection of bugs. This integration, which occurred mid-project after the primary features had been manually tested and stabilized, was focused on improving overall product quality and efficiency.

The objectives were multifaceted: reducing the time spent on testing, optimizing user flow on the platform, and relieving the team of repetitive tasks so that it could focus more on covering new edge cases in the existing functionality. Emphasis was also placed on documentation and on achieving a synergistic balance between automated and manual testing.

The implementation process entailed several critical steps, starting with identifying key user flows and prioritizing test cases. A notable challenge was in developing a test suite that was not only comprehensive but also efficient enough to be integrated seamlessly into the deployment process. Initially, all tests were included in the deployment, leading to time-consuming processes, which necessitated a more streamlined approach.

This shift in strategy resulted in significant achievements: test coverage increased to 80% of all functionalities, regression testing time was cut down from 8 hours to 2 hours, and there was an overall improvement in the quality of manual testing.

Future plans include continuous enhancement and extension of the automated test suite to cover new product functionalities. The project's primary challenge was the realization that not all functionalities could be automated, requiring a strategic approach to maximize testing coverage efficiently and effectively.
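The article does not say how the deployment suite in this case was streamlined. One common approach, shown here purely as an assumption, is to tag tests and run only a small subset on every deployment while the full regression suite runs separately. All test names and tags below are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical catalogue: test name -> tags. None of these names come
# from the project described above.
TESTS: Dict[str, Set[str]] = {
    "login_happy_path": {"smoke", "regression"},
    "create_order": {"smoke", "regression"},
    "export_report_all_filters": {"regression"},
    "bulk_import_edge_cases": {"regression"},
}


def select(tests: Dict[str, Set[str]], tag: str) -> List[str]:
    """Return the names of all tests carrying the given tag, sorted."""
    return sorted(name for name, tags in tests.items() if tag in tags)


deploy_suite = select(TESTS, "smoke")        # fast checks on every deployment
full_suite = select(TESTS, "regression")     # complete run, e.g. before a release

print(deploy_suite)     # ['create_order', 'login_happy_path']
print(len(full_suite))  # 4
```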

Case #3

Project

A SaaS platform specifically designed for clinics and their patients.

Results

- Efficient testing process ensuring reliable SaaS performance;

- Reduced QA regression testing from 2 days to half a day;

- QA costs cut fourfold with strategic automation.

Future goals

- Increase the percentage of autotest coverage.

Description

This platform's primary goal is to improve the efficiency of clinical processes, enhance the quality of patient care, and optimize healthcare management. The solution provided by this platform addresses the intricate needs of healthcare providers and patients, ensuring streamlined operations and better healthcare outcomes.

The process of implementing automated testing in Case 3 was identical to that in Case 2, involving the strategic integration of automated testing after the core features had undergone manual testing and stabilization. This approach was critical in enhancing the overall quality of the product and ensuring a more efficient testing process.

The successful implementation of automated testing brought substantial improvements: it reduced QA regression testing time from 2 days to just half a day and cut QA costs fourfold.

These results highlight the effectiveness of automated testing in a complex healthcare SaaS environment, not only in saving time but also in reducing operational costs.
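The headline figures in Case #2 and Case #3 all come down to the same ratio, which a short calculation makes explicit. The helper name is hypothetical, and reading "fourfold" as costs falling to one quarter of their previous level is an assumption about the wording.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return how much smaller `after` is than `before`, in percent."""
    return (before - after) / before * 100


# Case #2: regression testing went from 8 hours to 2 hours.
print(percent_reduction(8, 2))    # 75.0

# Case #3: regression testing went from 2 days to half a day.
print(percent_reduction(2, 0.5))  # 75.0

# A fourfold cost cut, read as 4 units of cost becoming 1 unit.
print(percent_reduction(4, 1))    # 75.0
```

Under that reading, a fourfold cost cut and a 75% cost reduction describe the same result.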

How to Prepare for the Transition from Manual to Automated Testing - Yulia’s Recommendations:

1. Proactive Learning and Training

Yulia stresses that the QA team needs to acquire automation skills proactively through extensive learning and structured training programs. She recommends formal training, interactive workshops, and persistent practice to build the required competency. In her view, a well-informed and skilled team is the backbone of a successful transition, so it is important to foster an environment that encourages learning and skill enhancement.

2. Structured Migration Plan

Yulia’s insights highlight the importance of a well-organized migration plan. She advises starting with the automation of simple, high-impact test cases, gradually progressing to the more intricate ones. This approach allows the team to steadily develop confidence and refine their expertise. Yulia believes that a structured and phased approach ensures that the transition is manageable and the team isn’t overwhelmed by the complexities of automation from the start.
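One way to turn "simple, high-impact first" into an ordered backlog is to score each candidate test case. The sketch below is a hypothetical scoring scheme, not Yulia's method: the `Candidate` type, the 1 to 5 scales, and the example test cases are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    name: str
    impact: int      # 1 (low) to 5 (high), hypothetical team estimate
    complexity: int  # 1 (simple) to 5 (intricate), hypothetical team estimate


def automation_order(candidates: List[Candidate]) -> List[Candidate]:
    """Sort by impact minus complexity, highest first; on a tie,
    the simpler test case comes first."""
    return sorted(
        candidates,
        key=lambda c: (-(c.impact - c.complexity), c.complexity),
    )


backlog = [
    Candidate("Report export with filters", impact=3, complexity=4),
    Candidate("Multi-step payment flow", impact=5, complexity=5),
    Candidate("Contact form happy path", impact=5, complexity=1),
    Candidate("Login", impact=5, complexity=2),
]

ordered_names = [c.name for c in automation_order(backlog)]
for name in ordered_names:
    print(name)
# Contact form happy path
# Login
# Multi-step payment flow
# Report export with filters
```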

3. Investment in Infrastructure

A robust and versatile infrastructure is crucial for integrating automated testing. Yulia highlights the fundamental role of Continuous Integration / Continuous Deployment (CI/CD) pipelines: they are integral to the automation infrastructure, and she notes that setting them up doesn’t significantly increase overall costs.

4. Choosing the Appropriate Tools

The selection of suitable and efficient tools is pivotal in automation. Yulia prefers user-friendly solutions like Cypress for their simplicity and their effectiveness in writing complex tests. She values Cypress for its user-centric design, which helps teams navigate the intricacies of automation. She also warns that more complex tools like Selenium have a steep learning curve for beginners.

5. Continuous Evaluation and Improvement

Yulia emphasizes the critical role of periodically evaluating and refining the automation strategy. She insists on continuous assessment to identify areas for potential enhancements and optimizations. Regular refinement of test cases and automation scripts is, according to Yulia, essential to adapt to evolving requirements and maintain optimal coverage and efficiency.

6. Robust Support and Collaboration

Effective communication and a supportive management structure are vital during the transition. Yulia highlights the significance of close collaboration between development and QA teams and of strong backing from management. She considers both to be linchpins of the transition process, fostering an environment conducive to innovation and excellence.

Conclusion

Automated testing, while demanding a higher initial investment and a steeper learning curve, emerges as a crucial component for large-scale, complex projects. It lets you significantly reduce costs while still nailing the quality.

On the other hand, manual testing remains irreplaceable for assessing user experience and for exploratory testing on smaller projects.

At JetBase, we can assess the most effective testing option at each stage of testing, depending on your project. If you are interested in reducing the cost of testing on your project, contact us by submitting the contact form.